The Right Task for the Job

The first task with any machine learning problem is to outline your goal. What are you trying to accomplish, what data will be available, and what compute resources will be available to you? After you have a rough idea of your goals and resources, the next step is to narrow down your model choices.

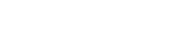

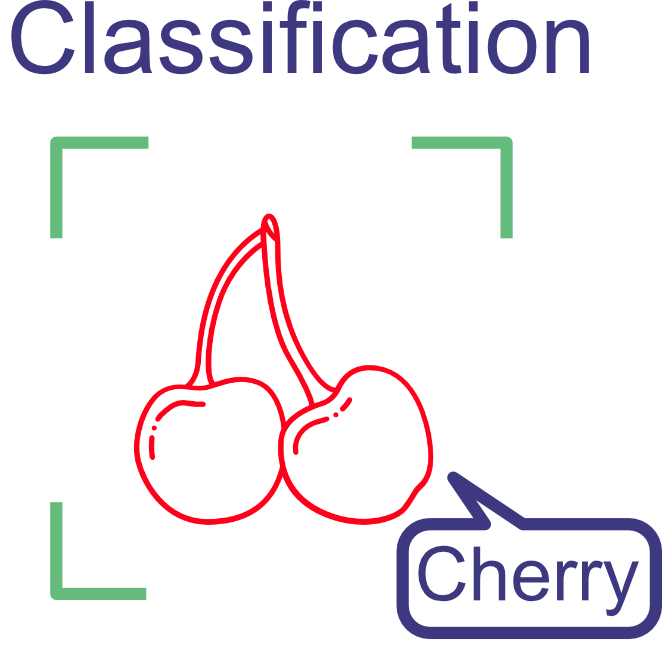

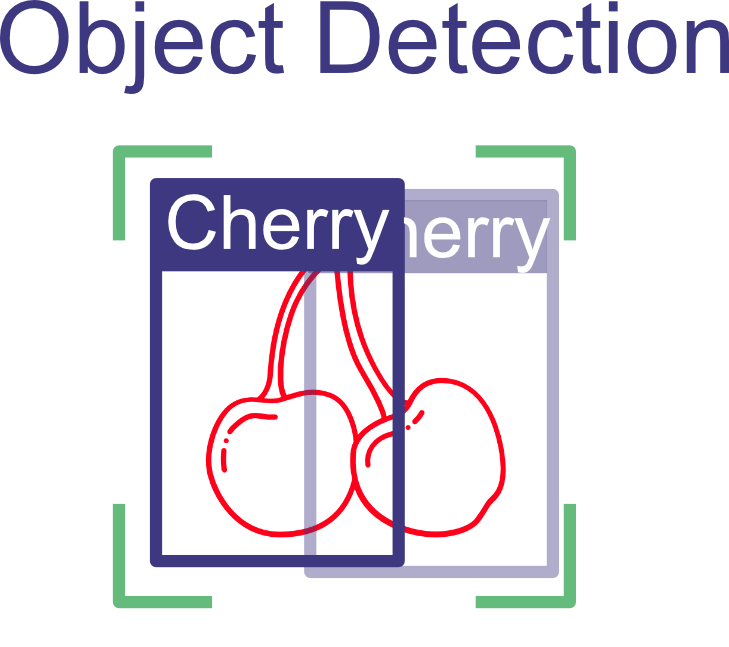

In Module 1, we looked at the mechanics behind how machines interpret images and explored an example of image classification. Now, we’ll explore the major types of computer‑vision tasks and models, each with its own strengths and ideal applications. Selecting the most appropriate model for a given task is key to achieving successful results in any computer‑vision project.

Choosing the Best Task

| Task | Benefits | Challenges |

|---|---|---|

|

Relatively Simple: Easier to understand and implement than object detection or segmentation models. Computationally Efficient: Often the fastest and least computationally intensive to train and run inference (make predictions) on. Simple Annotation: Annotating images to create training data is relatively easy. |

Limited Context: Only identify the dominant object in a picture and don’t provide accurate locations. Challenges with Multiple Objects: May struggle if an image contains several objects of interest. No Detailed Localization: Cannot pinpoint the boundaries of an object. |

|

Detailed Context: Provide both object classification and accurate bounding boxes. Multiple Objects: Can handle scenarios with several objects in a single image. Versatile Applications: Useful for tasks like self‑driving‑car systems, inventory monitoring, and security applications. |

Higher Complexity: More complex to train and implement than basic classification models. Computational Cost: Can be more computationally demanding, especially for real‑time applications. Occlusion Sensitivity: Difficulty in detecting objects if they are partially blocked or overlapping. |

|

Pixel‑Level Detail: Provide the most precise object outlines and boundaries. Complex Scenes: Excellent for understanding complex images with overlapping or multiple objects. Diverse Applications: Critical for medical image analysis, autonomous navigation, and satellite imagery analysis. |

Maximum Complexity: Among the most complex computer‑vision models to implement and train. Significant Computational Demand: High processing requirements, potentially making them unsuitable for real‑time applications. Data‑hungry: Often require large and meticulously labeled datasets for accurate training. |

|

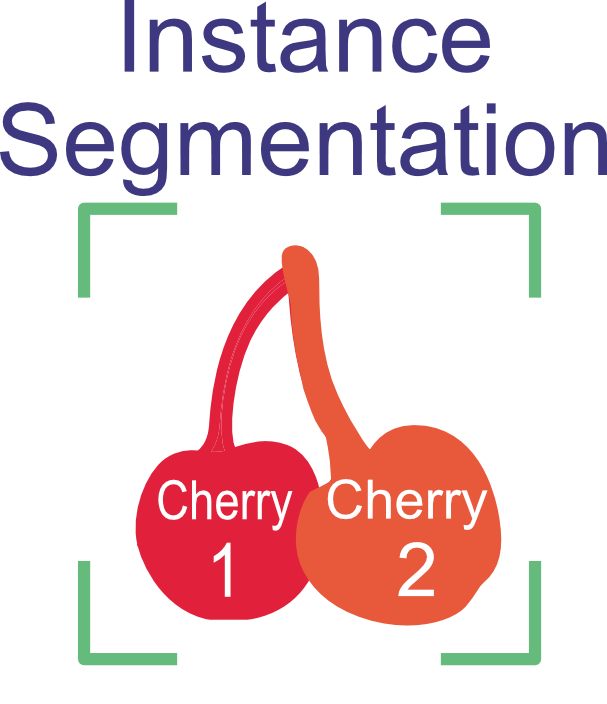

Combines the benefits of object detection and semantic segmentation. Use where counting object instances or tracking individual instances is needed. |

Most complexity: Training data and model training are the most complex tasks discussed. |

Important Task Selection Considerations

Task Requirements: The primary deciding factor in model choice is always the specific problem you’re trying to solve.

Speed vs. Accuracy: There is often a trade‑off between model complexity and how fast it can make predictions. Faster models may be less accurate, and higher‑accuracy models may be slower.

Available Resources: Larger and more complex models typically require more computational power and training time.

Return to Module 2 or Continue to Implementing Computer Vision Models with Python APIs